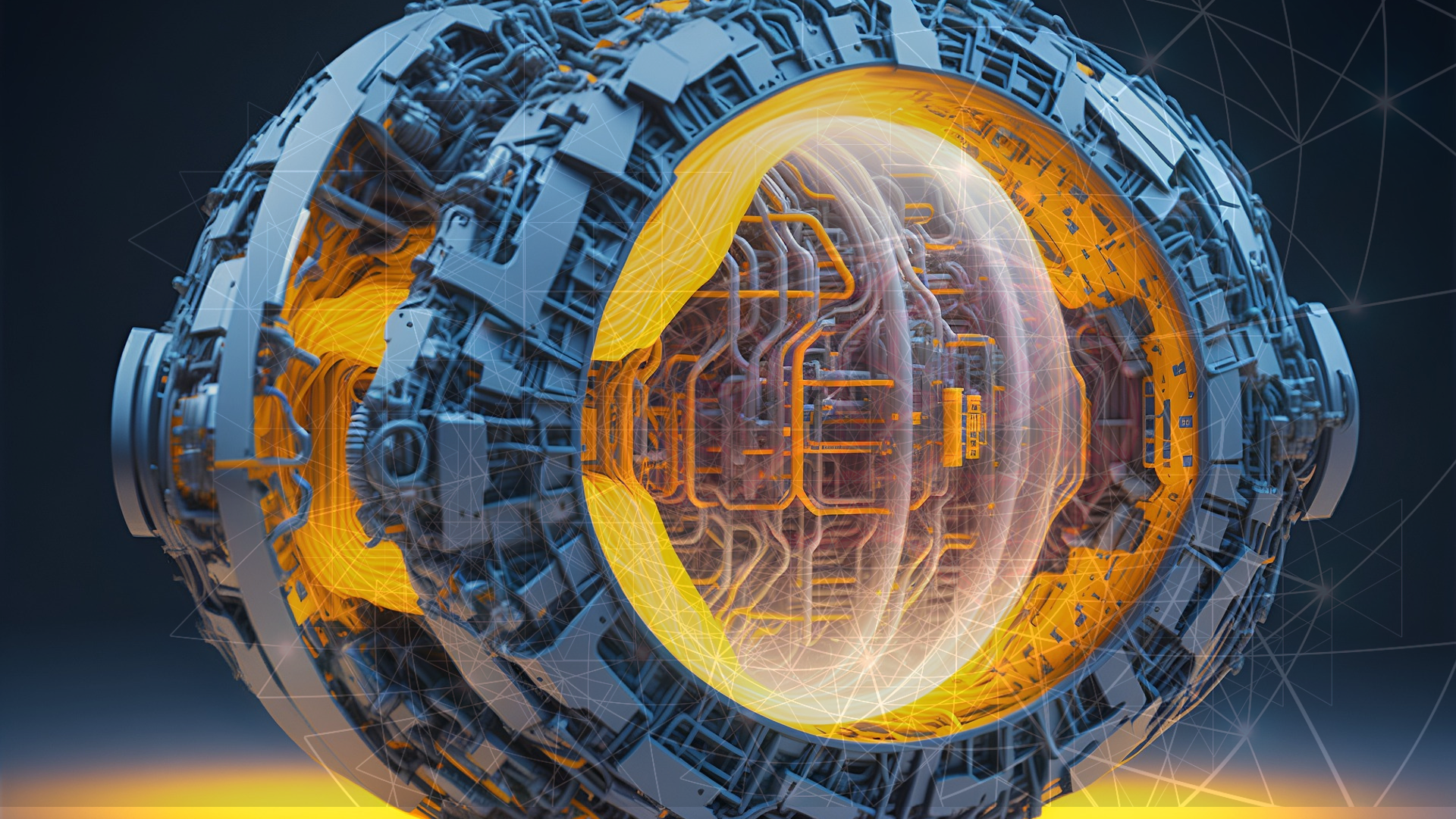

Fusion energy holds immense promise. The goal is to harness the power of the stars to generate clean, limitless energy on Earth. However, achieving this requires overcoming significant challenges. Researchers at Princeton and the Princeton Plasma Physics Laboratory (PPPL) have achieved a breakthrough by using machine learning (ML) to control plasma edge bursts in fusion reactors; this advancement enhances reactor performance while preventing damage.

Achieving a sustained fusion reaction is complex. It requires maintaining a plasma that is dense, hot, and confined long enough for fusion to occur. Yet, as researchers push plasma performance limits, new challenges arise. One major issue is energy bursts escaping from the edge of the plasma. These edge bursts impact performance and damage reactor components over time.

The team has developed a machine learning method to suppress these harmful edge instabilities. They achieved this without sacrificing plasma performance. Their approach optimizes the system’s suppression response in real time, maintaining high performance without edge bursts at different fusion facilities.

The researchers published their findings on May 11 in Nature Communications. They demonstrated their method’s success at the KSTAR tokamak in South Korea and the DIII-D tokamak in San Diego. Each facility has unique operating parameters, yet the machine learning approach achieved strong confinement and high fusion performance without harmful plasma edge bursts.

According to research leader Egemen Kolemen, associate professor of mechanical and aerospace engineering at the Andlinger Center for Energy and the Environment, the team not only demonstrated that their approach could maintain a high-performing plasma without instabilities but also proved its effectiveness at two different facilities.

“We demonstrated that our approach is not just effective – it’s versatile as well,” Kolemen said.

High-confinement mode in fusion reactors is a promising approach. It involves a steep pressure gradient at the plasma’s edge, offering enhanced plasma confinement. However, this mode historically comes with instabilities at the plasma’s edge. Traditional methods to control these instabilities, like applying magnetic fields, often lead to lower performance.

“We have a way to control these instabilities, but in turn, we’ve had to sacrifice performance, which is one of the main motivations for operating in high-confinement mode,” said Kolemen, who is also a staff research physicist at PPPL.

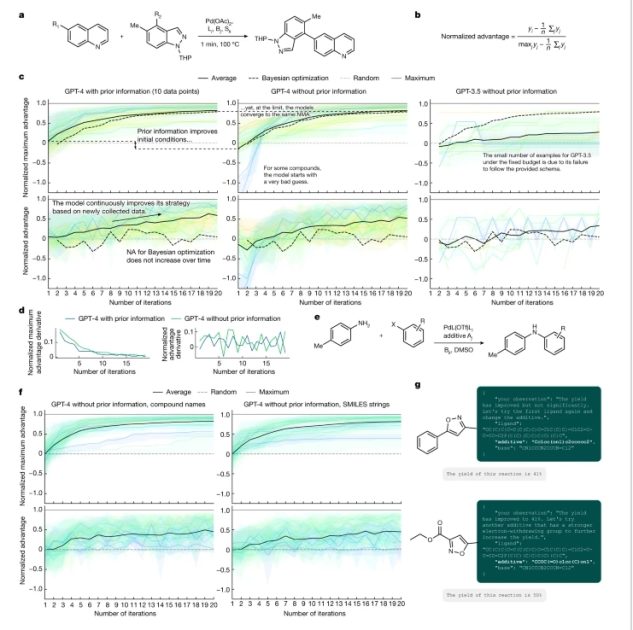

The machine learning model developed by the Princeton-led team reduces computation time from tens of seconds to milliseconds. “With our machine learning surrogate model, we reduced the calculation time of a code that we wanted to use by orders of magnitude,” said co-first author Ricardo Shousha, a postdoctoral researcher at PPPL and former graduate student in Kolemen’s group.

This enables real-time optimization. The model monitors the plasma’s status and adjusts magnetic perturbations as needed. This balance between edge burst suppression and high fusion performance is achieved without sacrificing one for the other.

Fusion reactors like KSTAR and DIII-D have shown that this machine learning approach is robust and versatile. The team is now refining their model for future reactors like ITER (Latin for “the way” and originally an acronym for “International Thermonuclear Experimental Reactor”), currently under construction in southern France. One area of focus is enhancing the model’s predictive capabilities to recognize precursors to harmful instabilities, avoiding edge bursts entirely.

This ML approach represents a significant breakthrough in fusion energy research. It addresses one of the main challenges in developing fusion power as a clean energy resource. The ability to control plasma edge bursts in real time without compromising performance is a game-changer.

“These machine learning approaches have unlocked new ways of approaching these well-known fusion challenges,” said Kolemen.

Fusion reactors rely on maintaining a high-performing plasma. Traditional physics-based optimization methods are computationally intense and time-consuming. They can’t keep up with the millisecond changes in plasma behavior. This machine learning method overcomes that limitation.

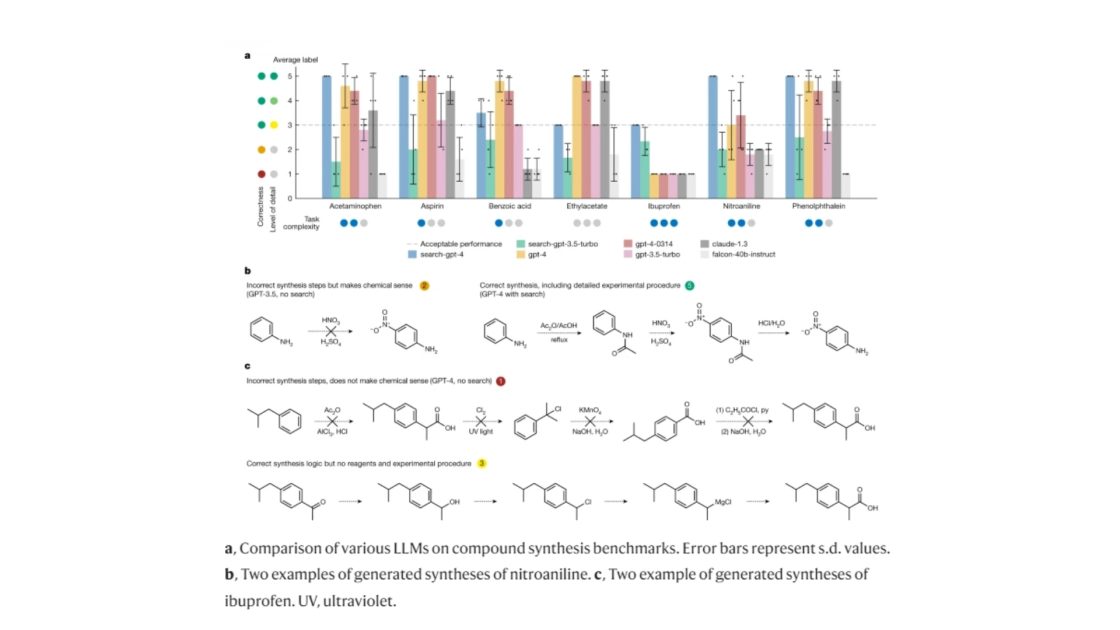

The Princeton team’s model uses a fully connected multi-layer perceptron (MLP) driven by nine inputs. These include the total plasma current, edge safety factor, and plasma elongation, among others. The outputs determine the coil current distribution across the top, middle, and bottom 3D coils.
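To make the shape of such a network concrete, here is a minimal sketch of a fully connected MLP forward pass with nine inputs and three outputs. It is an illustration only, not the team's model: the hidden width, the number of hidden layers, the `tanh` activation, the random untrained weights, and the placeholder input values are all assumptions that the article does not specify.

```python
import math
import random

N_INPUTS = 9    # from the article: nine plasma parameters
N_OUTPUTS = 3   # from the article: top, middle, and bottom 3D coil rows
HIDDEN = 16     # assumption: the hidden width is not given in the article


def init_layer(n_in, n_out, rng):
    """Create one fully connected layer with random weights and zero biases."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def dense(x, layer):
    """Compute W @ x + b for a single layer."""
    weights, biases = layer
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def forward(x, layers):
    """Apply tanh on hidden layers and leave the output layer linear."""
    for layer in layers[:-1]:
        x = [math.tanh(v) for v in dense(x, layer)]
    return dense(x, layers[-1])


rng = random.Random(0)
layers = [
    init_layer(N_INPUTS, HIDDEN, rng),
    init_layer(HIDDEN, HIDDEN, rng),
    init_layer(HIDDEN, N_OUTPUTS, rng),
]

# Hypothetical normalized inputs (plasma current, edge safety factor,
# elongation, and six others); real values would come from diagnostics.
features = [0.5] * N_INPUTS
top, middle, bottom = forward(features, layers)
print(top, middle, bottom)
```

Because the weights here are untrained, the three printed numbers carry no physical meaning; the sketch only shows how nine scalar inputs map to one value per coil row.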

The researchers used simulations from 8490 KSTAR equilibria to train the model. This approach predicts the optimal 3D coil setup that minimizes core perturbations and ensures safe edge burst suppression. Real-time adaptability is crucial for achieving reliable edge burst suppression in reactors.

Maintaining thermal and energetic particle confinement is essential for high fusion performance. However, undesired perturbed fields in the core region, caused by the resonant magnetic perturbations (RMPs) applied through the 3D coils, degrade fast ion confinement. The machine learning approach minimizes these negative impacts by enabling edge burst suppression with very low RMP amplitudes.

The experiments also showed enhanced RMP hysteresis and an increase in plasma rotation, both promising signs for future fusion devices. These effects allow edge bursts, known as edge-localized modes (ELMs), to be suppressed with minimal RMP amplitudes, reducing negative impacts on core confinement. This adaptive scheme makes high fusion performance in future devices more attainable.

The team’s method has shown remarkable performance boosts. In the DIII-D tokamak, for instance, the method achieved over a 90% increase in performance from the initial standard ELM-suppressed state. This enhancement isn’t solely due to adaptive RMP control but also to self-consistent plasma rotation evolution.

The team’s innovative integration of the ML algorithm with RMP control enables fully automated 3D-field optimization and ELM-free operation. This approach is compatible with plasma operation that satisfies ELM suppression conditions. It’s a robust strategy for achieving stable edge burst suppression in long-pulse scenarios.

Future fusion reactors like ITER face challenges due to metallic walls, which can introduce core instabilities from impurity accumulation. Adaptive control can mitigate these issues by optimizing RMPs to reduce impurity accumulation while preserving high plasma confinement.

Some features still need improvement before fully adaptive RMP optimization over entire discharges is possible in future devices. Current strategies rely on ELM detection during optimization, which isn’t ideal for fusion reactors because the bursts must occur before the controller can react. Identifying and responding to ELM precursors in real time is crucial for complete ELM-free optimization.
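A detection-based scheme of this kind can be pictured as a simple feedback rule: lower the RMP amplitude while the plasma stays ELM-free, and raise it when an ELM is detected. The sketch below is a toy illustration under stated assumptions, not the team's controller. The function names, step sizes, normalized amplitude range, and the fixed suppression threshold in the toy plasma are all hypothetical, and the toy ignores the hysteresis and rotation effects described above.

```python
def adapt_amplitude(amplitude, elm_detected,
                    step_down=0.02, step_up=0.1, lo=0.0, hi=1.0):
    """Return the next normalized RMP amplitude.

    Assumed rule: back off slowly while ELM-free, recover quickly
    after a detected ELM, and clamp to the allowed range.
    """
    if elm_detected:
        amplitude += step_up
    else:
        amplitude -= step_down
    return min(hi, max(lo, amplitude))


def simulate(threshold=0.4, start=1.0, steps=60):
    """Run the rule against a toy plasma.

    Assumption: ELMs return whenever the amplitude drops below a fixed,
    hypothetical threshold. Real plasmas are far less tidy.
    """
    amplitude = start
    history = []
    for _ in range(steps):
        elm = amplitude < threshold
        amplitude = adapt_amplitude(amplitude, elm)
        history.append((amplitude, elm))
    return history


history = simulate()
elm_count = sum(1 for _, elm in history if elm)
print(f"final amplitude: {history[-1][0]:.2f}, ELMs detected: {elm_count}")
```

In this toy, the amplitude drifts down toward the threshold and then hovers just above it, but only by repeatedly triggering ELMs. That is the limitation the paragraph above points to: a precursor-based scheme would aim to stop lowering the amplitude before the first burst occurs.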

Importantly, the breakthrough from Princeton’s machine learning approach lies in its ability to significantly improve fusion reactor performance while controlling edge bursts. This progress will help us move toward practical and economically sustainable fusion energy.