What does the future hold for humankind? Will our species even still be called ‘humankind’? Before asking whether future humans will be considered humans, we need to answer a more basic question: What is a human?

Characterized by bipedalism and large, complex brains, humans (Homo sapiens) are the most abundant and widespread species of primate.

This definition may change later, but how, to what extent, and by when? Will future humans be completely upgraded by machines and technology? Will they still be able to feel emotions and think critically? Or will they become something completely different, far removed from humans?

I find that the question of what makes people human is much more important than whether or not there will be machines in our future. The answer to whether we are still human will have an irreversible impact on our whole existence. That change is undeniably real, and it is only a matter of time before we face it.

Will future humans still be humans? And for how long? We’ll be discussing everything on the topic, from B to Y.

Customizing ourselves with a machine?

Let’s take our current level of technology as the baseline, and let’s assume that all of the following upgrades are possible:

1) Modify eyes, ears, and nose to increase the efficiency and quality of our senses.

Since we are assuming this upgrade will be available, there is no point wondering whether it is possible. The questions that can be raised here are, first, whether it is ethical to alter the human body artificially and, second, how much this upgrade will (or should) cost. Artificial organs are currently not affordable for everyone, but optimistic predictions say that they will become widely available in the future.

2) Upgrade our brain’s memory capacity.

This program would be expected to increase the brain’s memory capacity roughly tenfold, and, under our assumption, it will certainly be available in the future.

In addition, brain implants are already being tested in people with severe Alzheimer’s disease, with the aim of helping them think more clearly and remember more information, such as details about their hometown or the names of relatives.

3) Upload human memories into computer data storage in a format that language-processing software can read.

Many technologies that could support this program already exist. For example, brain-computer interfaces can already decode some of a person’s brain activity. With this program, we would be able to record the experiences, thoughts, and feelings of the human brain; in other words, to download everything we have ever experienced in our entire lives into computer data storage.

The question here is whether it is ethical to download someone’s memories into computer storage systems designed and programmed by humans.

4) Upgrade our genes and body with nanotechnology.

Today, it is already possible to use nanotechnology in medicine and cosmetic surgery. Hence, it is not a far-fetched idea that we will be able to upgrade our bodies with nanoparticles and nanorobots. We can expect this program to become available in the near future, not just for human beings but for all living things.

5) Upgrade our molecular composition.

The molecular composition of our bodies changes every few years due to various external influences, such as pollutants, vitamins, and food additives. However, we will face an irreversible change if technology itself replaces this basis with something far more drastic than anything we experience today: artificial, hardwired blood and brain tissue, and artificial skin (a cyborg body).

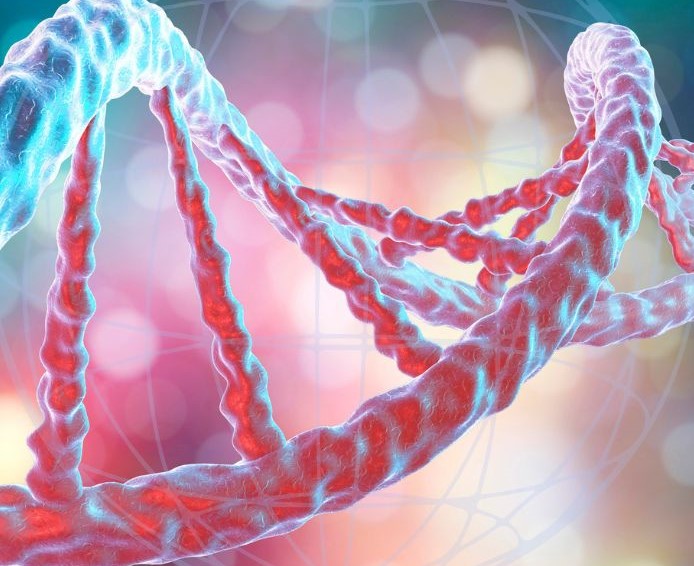

6) Upgrade our DNA with more genes and neurons.

To some extent, it is already possible to add genes to an organism, as has been done in animals through artificially controlled breeding and genetic modification. However, it is still not possible to go beyond a certain limit.

The question here is whether or not we really want such a change…

7) Upgrade our brain’s functionality by uploading artificial intelligence into it.

There are many possible approaches, but researchers have mainly focused on teaching software to simulate human thinking.

The idea behind this program is that humans will be able to upgrade their brains by downloading artificial intelligence (AI). The problem is not whether this is possible, but whether we can program the AI to think like us.

8) Upgrade the human body through genetic engineering to be able to survive on Mars or other planets.

It is said that gene editing techniques could improve our vision by preventing cataracts and blindness. I know genetic engineering and Mars colonization are an odd combination, but consider it anyway, just as Elon Musk does.

Beyond that, we may need to improve the body’s ability to resist disease and change its physical characteristics to adapt it for longer space travel.

The “will you still be considered a human” part?

a) Modifying eyes, ears, and nose for improved senses:

This method is already available today through surgery and implants (for instance, prostheses). The problem with such artificial organs is that they are not yet cheap or effective enough to become a standard upgrade. But if they can be made accessible and affordable, such surgical upgrades will certainly become common in the near future.

Will we still be humans? Yes. Modifying our organs to improve our senses (so-called augmentation) is completely compatible with human rights.

b) Add memory capacity to our brain by downloading artificial memories:

The goal of this program is no longer just to store or back up memories, but to create external memory support that lets us back up and store not only human consciousness but all human experiences, including hopes, dreams, ambitions, and knowledge. This program will be available in the near future, as it can easily be realized by combining today’s technologies.

Will we still be humans? Yes. Adding memory capacity to our brain is also compatible with being a human.

c) Upload all human experiences into the computer in a language-intelligible format:

This program is even more serious than the previous two. What would we do if everything we have ever seen, felt, or thought were downloaded and laid out in front of us? Would our minds still be ours?

Will we still be humans? Well, no. This would cause the extinction of what we presently call “mankind”, with everybody immersed in their own digital world forever.

d) Upgrade our genes with nanotechnology:

This is an even more extreme method than the previous three. This program will be available in the near future, as it can easily be realized by combining today’s technologies.

Will we still be humans? Yes and no. Upgrading our genes with nanotechnology would probably rearrange everything in the body, which could make us so different from what we currently are that we would no longer be considered human. But we cannot say for certain, as the definition of the word “human” is continuously changing.

e) Upgrade our molecular composition with nanotechnology:

This means that we would be able to change our basic composition, as well as the structure of our brain and body, into something totally different. This technology would not only change the human body but could also modify or upgrade all other existing life forms, including plants and animals.

Will we still be humans? No. This could irreversibly change human nature and lead to the extinction of human beings, who have been present on Earth for hundreds of thousands of years.

f) Upgrade our DNA with more genes and neurons:

Experts say that in the future, we will not be bound by the DNA we inherit from our parents; instead, we will be able to change our genes in a targeted way. Such on-demand editing could be done in diseased tissues like the retina or nerves, as it already is today, or, one day, even in the brain.

Will we still be humans? No. As with molecular upgrades, this could irreversibly change human nature and lead to the extinction of human beings as they have existed on Earth for hundreds of thousands of years.

g) Upgrade our brain’s functionality by uploading artificial intelligence into it:

We could realize this plan soon through AI research and neuroscience.

Will we still be humans? Yes. This would change our intelligence and how we use it, making us better versions of ourselves.

h) Finally, upgrade the human body through genetic engineering to be able to survive on Mars or other planets:

Will we still be humans? No. This could irreversibly change human physiology and could cause the extinction of human civilization as a whole.

Now, if we start living on Mars, will we still remain humans?

Will we still be humans? Maybe. There is no definite yes or no answer to this question. The goal of space colonization is to be able to stay on Mars for a long time, and there are many challenges to solve first, such as food supply and life support systems.

If we could develop all eight of these methods, would that necessarily lead us to extinction? Yes, if all eight were developed. But they cannot all be developed within your lifetime, let alone at the same time.

The effects of these eight methods are very different. Some of them might lead directly to the extinction of the human race and the rise of something other than humans. Combinations of the others could lead to the same outcome.

For example, “modifying our organs” and “upgrading our brain’s functionality by uploading artificial intelligence into it” may not wipe out our existence on their own. But combined, they could turn us into something other than human.

We still don’t know the effects of all these methods. These were simple examples showing the possible direction of our technological development. With more advanced technology, it will be possible to do many things that are impossible for us today.

However, future humans will always remain humans. Even if they turn out to be deeply customized by AI, they will, for the most part, still be considered humans.

How far can we tilt toward AI? And what is the “overbought” level?

Today, we are humans who hold smartphones in our hands. In the future, that may change. Not “vice versa” exactly, but by 2050, instead of using smartphones, we will be using brain chips.

By 2050, some have predicted that AI technology will read emotions to personalize each customer experience, and everyday interactions will be a mix of humans, AI-enabled machines, and hybrids.

We will use brain chips for everything, including AI functions. That does not mean the world will be filled with AI robots.

Instead, the “consciousness” part of us will become less and less important. We will be almost completely linked with AI, with most of our abilities provided by external systems and devices.

As a result, we will perceive the world differently. Our internal emotional response to something will probably become far weaker once our mind no longer has to deal with it directly. For example, you might not feel pain even if you touched a hot stove for 10 minutes straight.

As for the “overbought” part: will the AI in us become so powerful that we can no longer control it? Will we become so dependent on AI that our own minds can no longer cope?

AI will definitely have a psychological impact on us. Some software engineers, however, think that it’s not a big deal, since AI will learn how humans think and act and will become more sophisticated over time.

The obstacles to AI achievements

Before AI can deliver practical achievements, there are several obstacles to overcome. But there are also several reasons to believe these achievements are possible in the future.

Some of those obstacles are technical and interest-based, while others are human-based.

For the moment, AI is not advanced enough to think in a way that would allow humans to “think” like AI. Systems built on automation, machine learning, machine vision, natural language processing (NLP), and robotics are still nowhere close to emulating human thought processes.

AI is likely to eventually surpass both human intelligence and human creativity, and for now there is no visible limit to how sophisticated AI can become.

In 2011, IBM’s Watson became the first computer to beat human champions on “Jeopardy!”, even though it has no emotions or empathy and no brain at all! Imagine what AI will do by 2050! That would be the end of humanity! Hahaha…

We are not talking about “Physical AI” after all, are we?

Physical, destructive artificial intelligence makes for great movie graphics. But we are not that foolish, of course. Instead of creating intelligence in robots, we will upgrade our own intelligence through advanced customization.

In other words, AI will not become physical in the sense of taking over the world. It will look more like DALL·E 2 and GPT-3, software-based “assistants”, followed by DALL·E 3, 4, and GPT-4, 5, and so on.

By 2050, most of the things we do on a daily basis will be done by our own customized AI, much as we use our smartphones today.

Most future humans will rely on their own customized AI for all kinds of tasks and chores!

From daily news and notifications to entertainment and social media, finance management, knowledge and content search, research data analysis, and deep customization of programming or mechanical design, our AI might, in a sense, control our lives. But humans will still remain humans, with very little chance of falling under the rule of an advanced AI species.

A scenario of physical, destructive AI is possible in only a few cases:

- If we create intelligence in robots instead of upgrading our own: The first scenario is creating intelligence in non-living robot bodies. Now, why would we want to do this? Why not create better versions of ourselves?

- If we create intelligent physical robots as weapons: Create them as weapons? This is not the best idea, but neither are nuclear weapons, and they are an inseparable part of reality today.

- If we create intelligent physical robots for labor: What about the possibility of creating AI to serve humans? This may well have happened by 2050.

- AI race: While the space race produced a great many achievements for mankind, an AI race would cause destruction. If an AI race begins, the physical dominance of robots becomes inevitable.

- As an error: Maybe we will make a programming error that results in “AI going rogue”. I don’t know how likely this is, but always keep it in mind.

These are the potential scenarios in which physical AI could be built. Now, as I said before, the most likely future is the one where we upgrade ourselves. Let’s go deeper into the topic…

The extent of human customization by 2050

First of all, let’s talk about upgrading humans.

Many of us worry about what the future is going to bring for us, including technology and its impact.

Many well-known AI experts, such as Ray Kurzweil and Robin Hanson, think that creating AI will be very difficult in terms of complexity and programming. Some even question whether we should be pursuing AI-based customization at all.

We still cannot fully explain, or even simulate, what our brains do. The brain is complex in the way it regulates itself and communicates within itself. Nothing like this customization has ever been done before, and many people oppose it because of the negative side effects AI could have on humans.

But let’s get back to the point…

What else does human customization include?

As we know, our brain controls everything, including the emotions that make us what we are. We have consciousness and self-awareness, which give us an advantage over other beings in terms of conscience and freedom.

We have the free will to make decisions and to choose how we think, feel, and act. Because of this, AI cannot add entirely new skills or abilities to our thinking, but it can upgrade the ones we already have.

The real difference between a human-based AI system and a human-based physical AI system is that the latter can control physical things for us, while the former enables “us” to do so.

Neural computing has made significant progress in recent years. As a result, our brain can now double as a processing unit, compared with the old days when we needed big onboard computers and racks of wires carried on our shoulders.

We can already communicate with AI in some ways. For example, voice-based assistants such as Amazon’s Alexa respond vocally to human questions and requests.

If we could plug a wire into our brain, we could connect it to our own AI for better functionality.

Many experts think that the future will be full of powerful AI systems that help and enrich our daily lives and allow us to dive deep into the world, in all its complexity and beauty, through an intuitive interface.

It’s all about digitizing yourself and making your own customized avatar to communicate with people around the world and get access to more complex data than ever before.

Okay, that’s enough about brain upgrades!

What about other “physical” upgrades for humans?

For example, a robotic hand controlled by the human mind belongs in this category of physical upgrades.

We can already build such hands to some extent; there is no doubt about that. And we will be able to do far more advanced things, like controlling them seamlessly with our minds.

What if you could see through another man’s eyes or hear a bullet flying by? What if you could feel the ground under your feet and jump 20 yards high, just like a superhero?

Well, those are all possible upgrades for humans by 2050. We could make them possible by changing ourselves physically while also upgrading our own AI to newer versions, like AAI and VAI, all at once!

I am telling you: this is what the future of humanity will look like.

What level of customization would make us humanoid?

Well, that would be the point where we look, think, and live like robots, and our consciousness becomes a secondary part of our existence. This is very sci-fi, by the way.

The future will be different from what we imagine. “Mechanical” minds are already with us and will continue to spread throughout the world. But the scenario above lies in the future, not today! It’s not that far off, but still far enough.

It’s up to you to decide about your own future and which part of it you want to live in, if you want it at all.

Becoming a cyborg of sorts is another option for upgrading humans in 2050. Yes, many movies have shown how this could happen, usually in stories about alien invasions, zombies, and other kinds of disasters.

But it can also be an evolutionary process that has been under way for a long time. Humans have modified their bodies throughout history; we have always altered our own appearance, much as we change our style.

The future of humanity is not set in stone — we can change it for the better. What you do today will affect your tomorrow.

There are plenty of things to consider when trying to predict the future of humanity and the way it treats itself in 2050.

Will future humans still be humans?

Of course, they will. Despite the human customizations and upgrades, we will remain humans.

The existence of AIs is going to change the way our lives look. We will depend on them more than ever before, and because artificial intelligence will rule our lives, we will need to get smarter about what we do.

But we will still be humans, because whatever goes wrong, it is in our nature to adapt and to correct our mistakes.

From long-range missiles loaded with nuclear weapons to the greenhouse gases we produce, there are many human-made threats around us. Now that we have created technology and AI, it is up to us as a species whether we use them wisely or foolishly. If we use them wisely, we will still remain humans.

But if we allow them to manipulate us (physically, by distorting our DNA; psychologically, by corrupting our intelligence; and mentally, by modifying, merging, and controlling our neurons), we may well lose our status as humans, completely surrendering ourselves to the new, artificial species.

Yes! It’s all up to us in the end.

From the discussion above, it’s clear that the answer depends on how we define what a human is, even if it is impossible for us today to draw a clear line between humans and upgraded superhumans.

The long-term course of evolution will definitely be shaped by some form of artificial intelligence, which means that we as human beings will first have to learn to live with it, and then live on after it.