The United Nations has unanimously adopted the first global resolution on artificial intelligence, a significant step in how the technology is governed. The resolution centers on using AI for the greater good, with a special group set up to advise on how to govern AI worldwide. While this first UN-adopted resolution addresses various critical aspects of AI development, it falls short of a truly futuristic approach that adequately anticipates and navigates the complexities of AI’s impact on society.

The resolution, titled “Seizing the opportunities of safe, secure and trustworthy artificial intelligence systems for sustainable development,” reaffirms the UN’s commitment to international law, human rights, and the Sustainable Development Goals (SDGs). Its preamble reads: “The General Assembly, Reaffirming international law, in particular the Charter of the United Nations, and recalling the Universal Declaration of Human Rights, . . .”

The historic international document also acknowledges previous resolutions and declarations concerning technology and human rights.

Recognizing opportunities and risks

The long-awaited resolution points out that AI carries both opportunities and risks. On the one hand, AI can accelerate progress towards the SDGs by addressing global issues like poverty, health, food security, climate change, energy, and education.

On the other hand, the resolution also acknowledges the risks associated with AI that is not designed and deployed properly. These risks include spreading misinformation, amplifying biases, violating privacy, and the potential for AI manipulation.

To address these concerns, the resolution emphasizes the importance of developing safe, secure, and trustworthy AI systems. It also stresses the need for AI development to adhere to international law and human rights principles.

“Emphasizes that . . . Member States and, where applicable, other stakeholders to refrain from or cease the use of artificial intelligence systems that are impossible to operate in compliance with international human rights law or that pose undue risks to the enjoyment of human rights, especially of those who are in vulnerable situations, and reaffirms that the same rights that people have offline must also be protected online, including throughout the life cycle of artificial intelligence systems,” the resolution affirms.

This is meant to ensure that AI is used responsibly, safeguarding individuals and society from potential harm.

Bridging divides and promoting inclusivity

The UN-adopted resolution’s focus on narrowing digital gaps deserves admiration. It acknowledges the differences in technological progress, where developed nations lead in AI advancements while developing countries often lag behind. This gap in digital access can worsen social and economic disparities.

In response, the resolution highlights the importance of helping developing nations build their technological capabilities.

“. . . and providing support for the mitigation of potential negative consequences for workforces, especially in developing countries, in particular the least developed countries, and fostering programmes aimed at digital training, capacity building, supporting innovation and enhancing access to benefits of artificial intelligence systems,” the resolution states.

Enhancing engineering expertise in these countries, for instance, is crucial for sustainable development and better infrastructure. Collaborating with international organizations and NGOs can provide valuable support in terms of knowledge, funding, and technical assistance.

Furthermore, the resolution emphasizes the need for inclusive governance in AI development. It stresses the importance of considering the needs and capacities of both developed and developing countries. While developed nations may have more resources and expertise in AI, developing countries may face unique challenges requiring tailored solutions.

Promoting ethical AI practices

The UN resolution strongly underscores the importance of ethical considerations in the development of AI. Ensuring that AI systems are built and used responsibly is critical. For example, AI systems should be designed to promote fairness, avoid discrimination, and enhance accessibility.

Respecting human rights is a core part of these ethical considerations. Since AI has the potential to significantly impact human life, it’s vital to develop and employ AI systems while respecting and upholding human rights.

Preserving privacy is another essential aspect of ethical AI development. AI often deals with sensitive data, so it’s important to handle this information responsibly to safeguard individuals’ privacy. Sound data-handling practices and the use of representative data sets can help protect privacy.

Addressing biases is also crucial in AI development. Biases in AI systems can result in unfair or discriminatory outcomes. Therefore, it’s important to identify and mitigate these biases during the AI development process.

The resolution encourages the adoption of regulatory frameworks and governance approaches that support responsible AI innovation. “Encourages . . . academia and research institutions and technical communities, to provide and promote fair, open, inclusive and non-discriminatory business environment, . . . as well as encourages Member States to develop policies and regulations to promote competition in safe, secure and trustworthy artificial intelligence systems and related technologies . . . ,” the resolution explains.

These frameworks and approaches can help ensure that AI systems are developed and used responsibly, while also minimizing potential risks.

Transparency, accountability, and human oversight are emphasized throughout the AI life cycle. Transparency ensures that the workings of AI systems are clear and understandable. Accountability ensures that AI systems and their outcomes are fair and justifiable. Human oversight ensures that humans retain control over AI systems throughout their life cycle.

The resolution aims to address concerns related to algorithmic discrimination and privacy infringement. Algorithmic discrimination can occur when AI systems contribute to unjustified differential treatment based on certain characteristics. Privacy infringement can occur when AI systems misuse or mishandle sensitive data.
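To make the idea of unjustified differential treatment more concrete, here is a minimal Python sketch of one common audit measure: comparing the rate of favourable outcomes across groups. Nothing here comes from the resolution itself. The function names, the group labels “A” and “B,” and the sample decisions are all hypothetical, and a low ratio is only a signal that warrants closer review, not proof of discrimination.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Return the share of favourable outcomes per group.

    `decisions` is an iterable of (group, approved) pairs, where `approved`
    is True when the system produced the favourable outcome.
    """
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {group: approvals[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest to the highest group selection rate (1.0 = parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favourable outcome
    return min(rates.values()) / highest


# Hypothetical audit log: (group label, favourable decision?)
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(sample)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
```

In this made-up sample, group A receives the favourable outcome three times out of four and group B once out of four, so the ratio is well below parity. An auditor would then look at whether the difference can be justified.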

Utilizing data for sustainable development

The resolution recognizes the crucial role of data in AI systems. AI’s exceptional ability to utilize data makes it an invaluable asset for promoting sustainable development, as stated by the resolution.

The UN-adopted resolution reads: “Resolves to promote safe, secure and trustworthy artificial intelligence systems to accelerate progress towards the full realization of the 2030 Agenda for Sustainable Development. . .”

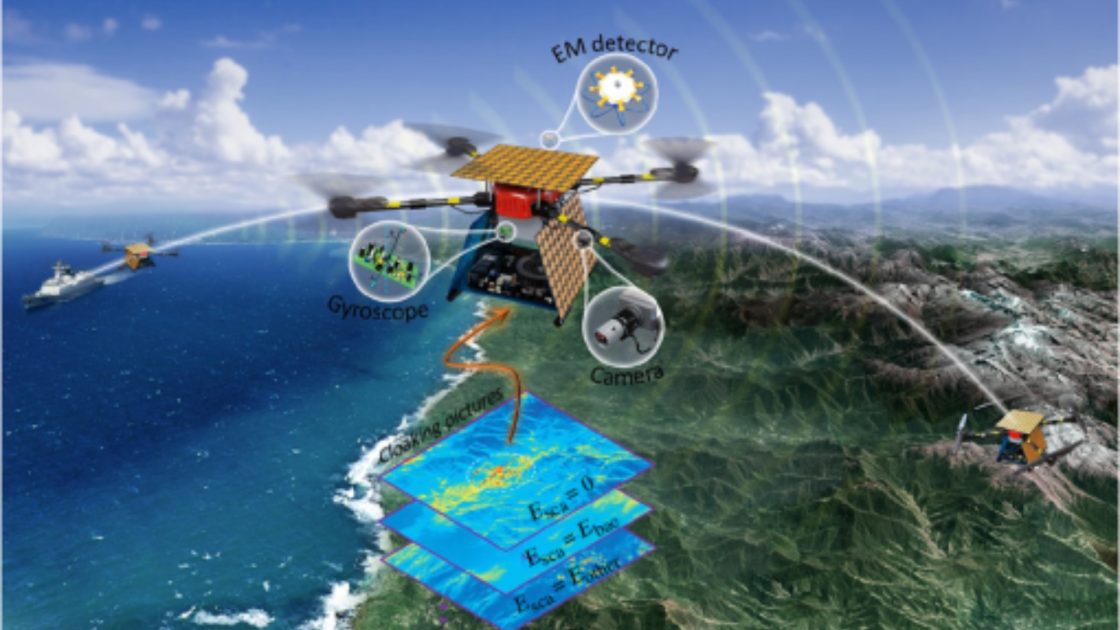

Take, for instance, AI’s capacity to provide analytical insights for biodiversity projects, such as those focused on coral reefs.

Moreover, the resolution underscores the significance of fair, inclusive, and efficient data management practices. This involves establishing standardized procedures for collecting, storing, and utilizing data across the organization, defining protocols for data classification and security based on sensitivity levels, implementing processes to maintain data accuracy and consistency, and enacting policies to manage data throughout its lifecycle.

In the resolution, there’s also a call for international collaboration and support to enhance data infrastructure and accessibility. For instance, organizations like the International Telecommunication Union (ITU) are fully dedicated to assisting member states in implementing ICT accessibility policies worldwide, ensuring equitable inclusion in digital societies, economies, and environments regardless of age, gender, ability, or location.

Furthermore, the resolution advocates for trusted cross-border data flows. The challenge lies in creating a global digital framework that facilitates data movement across borders while ensuring appropriate oversight and protection, a principle termed ‘data free flow with trust’ (DFFT).

By advocating for inclusive and consistent data governance practices, the resolution seeks to harness AI’s potential for sustainable development responsibly. This approach ensures that AI development and usage prioritize the well-being of individuals and society, guarding against potential harm.

Looking towards the future

Overall, the resolution provides a thorough overview of the current challenges and opportunities presented by AI. It covers important areas like inclusivity, ethics, and data governance. However, it doesn’t fully embrace a futuristic approach to governing AI.

One of its shortcomings is the absence of a clear roadmap for dealing with rapidly emerging AI technologies and their potential impacts on society. For instance, the resolution doesn’t adequately tackle the regulatory challenges posed by both general-purpose and specialized AI tools, nor does it address issues such as misinformation, deepfakes, and surveillance in depth.

Additionally, the resolution could benefit from stronger mechanisms for monitoring and adapting to the rapid pace of technological advancements. Effective AI governance should involve continuous monitoring from the inception of a technology to its implementation and beyond. This includes anticipating and addressing unintended consequences and existential risks promptly and effectively.

Resolution receives positive reception

The United States led the resolution, with support from over 120 other Member States. It passed unanimously, without any objections.

Many in the AI industry welcomed the resolution. Brad Smith, Microsoft’s Vice Chair and President, expressed full support, saying, “We fully support the @UN’s adoption of the comprehensive AI resolution. The consensus reached today marks a critical step towards establishing international guardrails for the ethical and sustainable development of AI, ensuring this technology serves the needs of everyone.”

“The United States also welcomes the UN General Assembly’s adoption of a resolution setting out principles for the deployment and use of artificial intelligence (AI),” Vice President Harris said in a statement.

China and Russia, along with over 120 member nations, co-sponsored the resolution. The UK, another co-sponsor, has already shown interest in AI regulation. Building on its National AI Strategy and its Science and Technology Framework, it has adopted a pro-innovation approach to AI regulation, aiming to create a framework that is proportionate and future-proof.