Great news for those lacking vocal cords!

Researchers have developed a throat patch that lets people speak without relying on their vocal cords. The machine learning-assisted wearable sensing-actuation system, described in a study published in Nature Communications on 12 March, translates throat muscle movements into speech.

The device is a flexible patch that attaches to the neck and picks up the muscle movements a person makes when forming words. Essentially, you can communicate without relying on your vocal cords!

But here’s the really clever part: this patch not only senses throat movements associated with speech, but it also uses those movements to generate its own power. That means no need for batteries or charging!

The device offers hope to people with voice problems caused by damaged or paralyzed vocal cords, including those recovering from throat cancer surgery.

Lead researcher Jun Chen, from the University of California, Los Angeles, got the idea after straining his voice during lectures that lasted several hours, as reported by Live Science. He began to imagine a way for a person to speak without using their vocal cords, also known as “vocal folds.”

Motivated by this idea, Chen and his team worked hard to create a flexible patch that could help people who cannot speak or are recovering from a temporary vocal issue.

The patch, which sticks onto the neck, senses throat muscle movements related to speech and turns them into electricity. What’s impressive is it works without needing batteries, making it easy to use every day.

The patch is made of five thin layers, including soft, flexible silicone and tiny magnets embedded in silicone, and it creates electrical signals when your throat muscles move. A machine learning program then turns these signals into speech.

A machine learning-assisted wearable sensing-actuation system could enable speech for individuals without vocal cords. Image Credit: Agencies/Jun Chen/University of California, LA

In a demonstration of the innovative tech, eight individuals without speech difficulties tested an algorithm’s ability to translate electrical impulses from a patch into speech.

The algorithm performed impressively, achieving around 95% accuracy in converting these impulses into understandable speech. Participants uttered phrases like “Merry Christmas” and “I hope your experiments are going well” while stationary, walking, and running.
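To make the accuracy figure concrete, here is a minimal Python sketch of how a phrase-level accuracy score can be computed: the share of trials in which the decoded phrase matches the phrase the participant actually said. The function name and the trial lists are hypothetical and made up for illustration; they are not data or code from the study, and the researchers may have scored their results differently.

```python
def phrase_accuracy(true_phrases, predicted_phrases):
    """Return the fraction of trials where the decoded phrase matches the spoken one."""
    if len(true_phrases) != len(predicted_phrases):
        raise ValueError("Both lists must contain one entry per trial.")
    if not true_phrases:
        raise ValueError("At least one trial is required.")
    correct = sum(t == p for t, p in zip(true_phrases, predicted_phrases))
    return correct / len(true_phrases)


# Hypothetical trials (not from the study): 20 utterances, one decoded wrongly.
spoken = ["Merry Christmas"] * 10 + ["I hope your experiments are going well"] * 10
decoded = list(spoken)
decoded[3] = "I hope your experiments are going well"  # simulated misclassification

accuracy = phrase_accuracy(spoken, decoded)
print(f"{accuracy:.0%}")
```

In this made-up example, 19 of the 20 phrases are decoded correctly, which works out to 95%.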

Ziyuan Che, the lead author of the study from the University of California, Los Angeles, told AFP that the patch could struggle to distinguish between certain words, such as ‘make’ and the name ‘Mark,’ which involve similar movements of the throat muscles.

“But those two words usually appear in a long sentence like ‘I am going to make dinner,’ or ‘How you doing Mark?’,” Che added.

Furthermore, in separate tests, participants were asked to either speak the sentences aloud or silently articulate them. Results showed that the algorithm effectively interpreted muscle movements in both scenarios, consistently generating the correct waveforms.

However, Chen said the testing was limited to just eight people saying a few phrases, and the patch has yet to be tested in people with speech disorders.

Another limitation, according to Chen, is that the current manufacturing process would need to be scaled up and made more efficient before the patch could be produced in large numbers.

Nearly a third of people suffer at least one voice disorder in their lifetime, according to the study.

The device, a simple, user-friendly option compared to current devices, could change how people with voice problems communicate.

Che said he believes more advanced algorithms would allow the patch to translate larynx muscle movements “without the need of pre-recording the voice signals”. He also cautioned that it would be years before the prototype could potentially be used by patients.

As of December, approximately 1 in 5 Americans surveyed has reported experiencing a voice disorder.

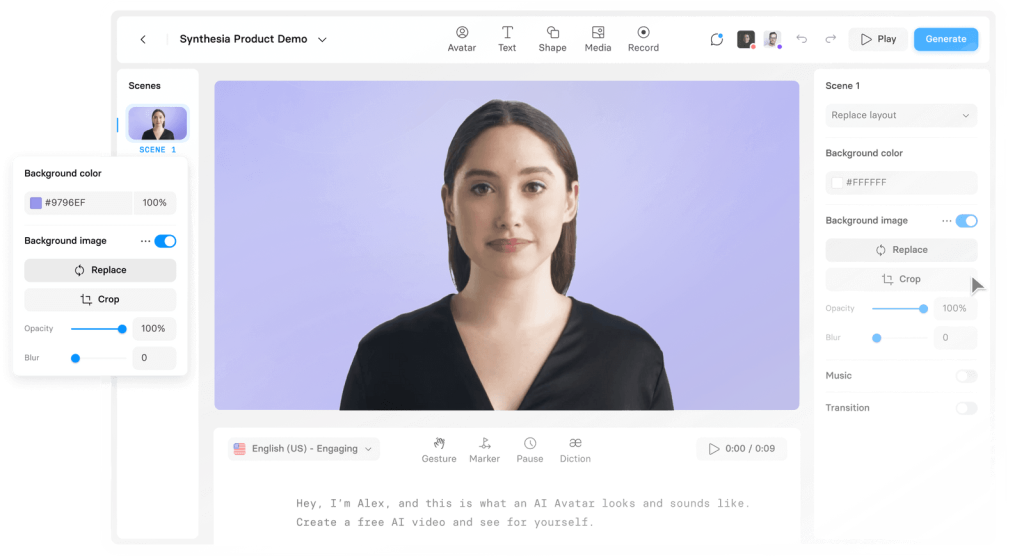
