Invention seldom goes as planned. Consider British pharmacist John Walker, who in 1827 accidentally ignited a coated stick while experimenting with chemicals. Walker’s chance discovery prompted advancements in matchstick technology. In the same way, new research led by Penn State engineers has uncovered remarkable properties of brochosomes, tiny particles that leafhoppers secrete and coat themselves with, inspiring innovation in next-generation devices.

Leafhoppers have long puzzled scientists with the way they use their brochosomes. These particles, resembling miniature soccer balls with hollow interiors, were first observed in the 1950s. By replicating the complex geometry of brochosomes, the researchers have now revealed their ability to absorb both visible and ultraviolet (UV) light.

This is the first time “we are able to make the exact geometry of the natural brochosome,” Wong said, explaining that the researchers were able to create scaled synthetic replicas of the brochosome structures by using advanced 3D-printing technology.

How did they figure this out?

The team made a larger version of brochosomes, about 20,000 nanometers in size, using advanced 3D printing. They carefully copied the shape, structure, and pore arrangement of these particles to study them closely.

Using a micro-Fourier transform infrared (micro-FTIR) spectrometer, they examined how the synthetic brochosomes interact with different wavelengths of infrared light. This helped them understand how these particles manipulate light.
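Why would an enlarged replica be probed with infrared rather than visible light? One common line of reasoning is geometric scaling: if a structure is enlarged by some factor, it tends to interact with proportionally longer wavelengths in a similar way. The Python sketch below illustrates that arithmetic only. The 20,000-nanometer replica size comes from the article; the natural diameter (`NATURAL_DIAMETER_NM`) and the example wavelengths are hypothetical placeholder values, and the sketch assumes the material's optical properties do not change with wavelength, which is an idealization rather than the researchers' stated method.

```python
def scale_factor(replica_diameter_nm: float, natural_diameter_nm: float) -> float:
    """Return how many times larger the replica is than the natural particle."""
    if replica_diameter_nm <= 0 or natural_diameter_nm <= 0:
        raise ValueError("diameters must be positive")
    return replica_diameter_nm / natural_diameter_nm


def equivalent_wavelength_nm(natural_wavelength_nm: float, factor: float) -> float:
    """Scale a wavelength by the same factor as the geometry.

    Assumes wavelength-independent material properties (an idealization).
    """
    return natural_wavelength_nm * factor


REPLICA_DIAMETER_NM = 20_000   # replica size quoted in the article
NATURAL_DIAMETER_NM = 600      # ASSUMPTION: placeholder, not stated in the article

factor = scale_factor(REPLICA_DIAMETER_NM, NATURAL_DIAMETER_NM)

# ASSUMPTION: illustrative wavelengths for UV and visible light.
for label, wavelength_nm in [("ultraviolet", 350), ("visible", 550)]:
    scaled_um = equivalent_wavelength_nm(wavelength_nm, factor) / 1000
    print(f"{label}: {wavelength_nm} nm on the natural particle "
          f"~ {scaled_um:.1f} micrometers on the replica")
```

Under these assumed numbers, the scaled wavelengths fall in the infrared range, which is consistent with the team's choice of an infrared spectrometer for the enlarged replicas.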

In the future, the researchers said they plan to improve the production process so that synthetic brochosomes match the size of natural ones more closely. They also aim to explore other uses for synthetic brochosomes, such as encryption systems in which data can be seen only under specific light conditions.

Replicating intricate brochosome geometry

The key to unlocking the potential of brochosomes lies in their precise geometry. Although brochosomes have been known for decades, replicating them in the lab has been a tough challenge because of their intricate structure.

Wang’s team overcame this hurdle using a two-photon polymerization 3D-printing method, producing synthetic brochosomes with remarkable optical properties. These faux brochosomes, while larger in scale, closely mimic the shape and morphology of their natural counterparts.

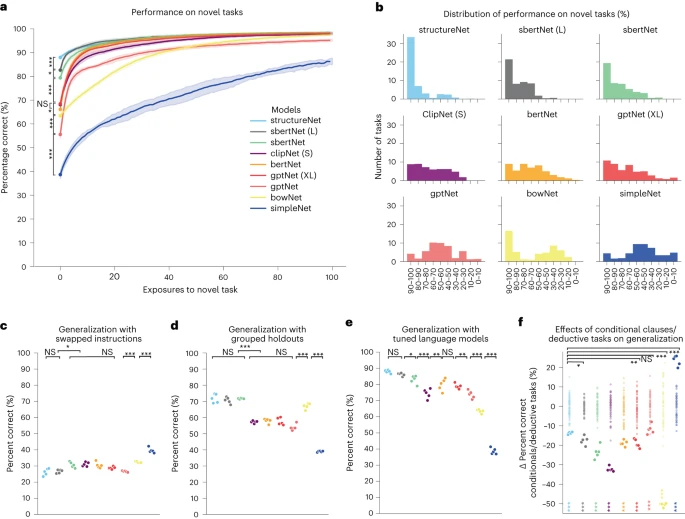

Leafhopper and its brochosomes. (A) An optical image of a leafhopper Gyponana serpenta. (B) A scanning electron microscopy (SEM) image of the leafhopper wing (highlighted area in panel A). (C and D) SEM images of brochosomes on the leafhopper wing, revealing their hollow buckyball-like geometry. (E) An SEM image showing the cross-section of a natural brochosome cleaved by the focused ion beam (FIB) technique. (F) The relationship between the diameter of brochosome through-holes and the diameter of brochosomes across different leafhopper species. Brochosome diameter and hole diameter were determined from our experimental measurements and a literature source (18). The fitted dashed line indicates that the through-hole diameters are approximately 28% of the corresponding brochosome diameters. Description/Image Credit: pnas.org
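The fitted relationship in panel F lends itself to a quick back-of-the-envelope estimate. The Python sketch below applies the roughly 28% ratio from the caption to a given brochosome diameter. The function name and the idea of applying the ratio to the 20,000-nanometer replica are illustrative assumptions; the ratio is an approximate fit across species, not an exact rule for any single particle.

```python
# Approximate ratio of through-hole diameter to brochosome diameter,
# taken from the fitted line described in the figure caption.
HOLE_TO_DIAMETER_RATIO = 0.28


def estimate_hole_diameter_nm(brochosome_diameter_nm: float) -> float:
    """Estimate the through-hole diameter from the overall particle diameter."""
    if brochosome_diameter_nm <= 0:
        raise ValueError("diameter must be positive")
    return HOLE_TO_DIAMETER_RATIO * brochosome_diameter_nm


# ASSUMPTION: the scaled-up replica preserves the same ratio as natural particles.
replica_hole_nm = estimate_hole_diameter_nm(20_000)
print(f"Estimated through-hole diameter for a 20,000 nm replica: {replica_hole_nm:.0f} nm")
```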

The consistency in brochosome geometry across leafhopper species is particularly intriguing. Regardless of the insect’s body size, brochosomes keep a consistent proportion between particle diameter and pore size, with through-holes measuring roughly 28% of the particle diameter. This uniformity suggests an evolutionary advantage, enabling leafhoppers to manipulate light to evade predators. By absorbing UV light and scattering visible light, brochosomes create an anti-reflective shield, reducing the insect’s visibility to UV-sensitive predators such as birds and reptiles.

Moreover, the densely packed arrangement of brochosomes on leafhopper wings further enhances their anti-reflective properties. Through careful experimentation and analysis, the researchers demonstrated how brochosomes minimize light reflection through both Mie scattering and through-hole absorption effects. These findings provide a physical basis for understanding leafhopper behavior and evolution.

Importance of this approach

The implications of this discovery are far-reaching, according to the researchers. Mimicking nature’s design, bioinspired optical materials could revolutionize various fields, from invisible cloaking devices to more efficient solar energy harvesting.

Lin Wang, the lead author of the study, highlights the potential for thermal invisibility cloaks based on leafhopper-inspired technology. By regulating light reflection, these devices could obscure thermal signatures, offering applications in military stealth or even consumer products.

“Nature has been a good teacher for scientists to develop novel advanced materials,” Wang said. “In this study, we have just focused on one insect species, but there are many more amazing insects out there that are waiting for material scientists to study, and they may be able to help us solve various engineering problems. They are not just bugs; they are inspirations.”

Stealth tech takes inspiration from backyard insect for invisibility innovation

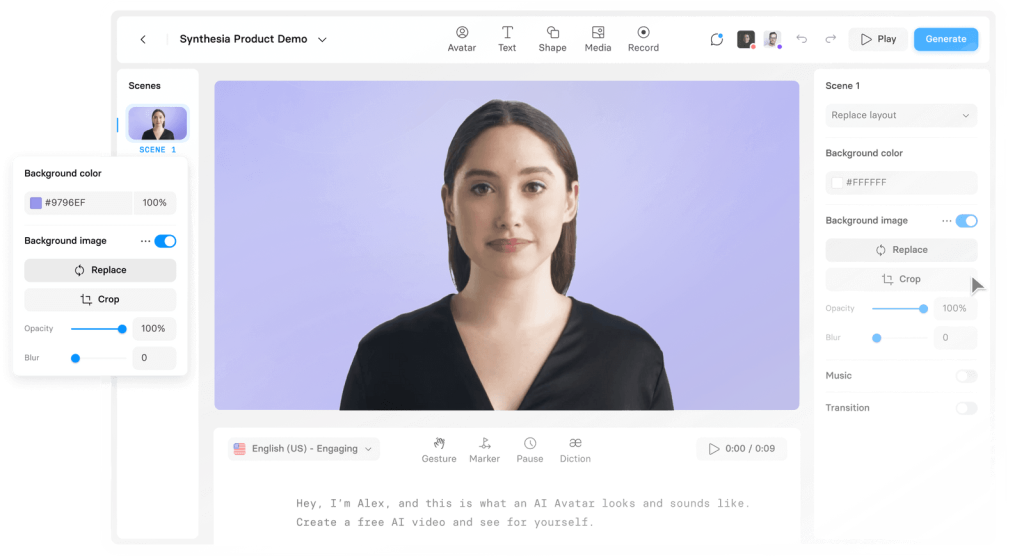

Inspired by leafhoppers, common insects found in backyards, researchers have started to develop a new generation of invisibility devices. Early this year, Chinese scientists from Zhejiang University introduced a game-changing technology called the ‘Guardian of Drone’: an intelligent aero amphibious invisibility cloak.

As reported in Advanced Photonics on January 12, the system smoothly integrates perception, decision-making, and execution functionalities. The key breakthrough lies in the manipulation of tunable metasurfaces, enabling precise control over scattering patterns across various spatial and frequency domains through spatiotemporal modulation.

Still, challenges remain in scaling up the production of synthetic brochosomes and exploring their further applications. Future research will focus on improving how they are made and finding new ways to use them, Wang said.