Much has been written about how artificial intelligence (AI) and machine learning (ML) are transforming a range of sectors, including personal computing. AI-powered PCs, which promise better performance and new capabilities, have generated considerable excitement.

However, is this emerging trend genuinely innovative, or merely an exaggerated marketing tactic? This article will examine the current state of AI PCs, their real-world effects, and future outlook.

AI-Powered PCs

AI-powered PCs incorporate neural processing units (NPUs) alongside traditional CPUs and graphics processors. The aim is to let these machines run AI and machine learning tasks efficiently on the device itself rather than relying on cloud computing. For AI-related applications, this design promises faster processing, lower latency, and better energy efficiency.

NPUs are specialized processors built to handle AI-specific workloads. GPUs (graphics processing units) can also run these workloads, but NPUs are optimized for power efficiency, which makes them well suited to laptops, where power consumption and battery life are critical. However, NPUs are still maturing and cannot yet replace GPUs for every AI task.

Intel and AMD have introduced NPUs in their latest processor lines, such as Intel's Core Ultra and AMD's Ryzen 8000G. These processors aim to accelerate AI features such as video-call effects and AI-driven document processing. Nevertheless, these first-generation NPUs fall short of the performance levels the industry is now setting as a baseline. For instance, Intel's current NPUs reportedly deliver less than the 40 trillion operations per second (TOPS) that Microsoft requires for its Copilot+ PC designation.
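For context, NPU throughput in TOPS is commonly estimated by counting each multiply-accumulate (MAC) operation as two operations (one multiply, one add) and multiplying by the number of MAC units and the clock frequency. The MAC count and clock speed below are hypothetical values chosen only to illustrate the arithmetic; they are not the specifications of any Intel, AMD, or Qualcomm part.

$$
\text{TOPS} = \frac{2 \times N_{\text{MAC}} \times f}{10^{12}}
= \frac{2 \times 16{,}384 \times 1.25 \times 10^{9}\ \text{Hz}}{10^{12}}
\approx 41
$$

Under these assumptions, a hypothetical NPU with 16,384 MAC units clocked at 1.25 GHz would just clear a 40 TOPS threshold. Vendors usually quote TOPS at low numeric precision such as INT8, so headline figures are not always directly comparable across chips.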

Current Market Adoption and Performance

The AI PC market is expanding, with significant shipments reported. According to Canalys, AI PCs accounted for 14% of all personal computer shipments in the second quarter of 2024.

Apple leads this market with its M-series chips, whose built-in Neural Engine handles AI tasks. Microsoft has also made strides with its Copilot+ PCs, which pair Windows with Qualcomm's Snapdragon PC chips and their integrated NPUs.

Despite these advances, the overall performance and utility of AI PCs remain mixed. Intel's push into the AI PC market has seen some success, with the company saying its Core Ultra processors support over 100 AI experiences. However, real-world applications and user benefits are still limited. AI features such as improved multitasking and enhanced security are in their early stages. For instance, AI assistance in everyday tasks like email management and data analysis is promising but has yet to reach its full potential.

Hurdles in AI PC Expansion

Despite the hype, several significant challenges hinder the widespread adoption of AI PCs. One major issue is the lack of substantial integration between AI hardware and software.

For instance, Microsoft's Copilot, a headline feature in AI PC marketing, currently runs in the cloud rather than on the local NPU. As a result, it gains little from the on-device hardware, which undermines the main argument for including an NPU in the first place.

Moreover, the current software ecosystem does not fully utilize the capabilities of NPUs. Most AI applications are still designed to run on cloud servers, making the specialized hardware in AI PCs less impactful. This situation is further exacerbated by the slow pace at which developers are adopting and optimizing their applications for NPU technology.

Another challenge is the high cost of AI PCs. As they incorporate cutting-edge technology, these machines are often priced higher than traditional PCs. This elevated pricing can be a barrier for many consumers, limiting the market for AI-powered devices.

Future Prospects and Progress

The future of AI PCs looks promising, but realizing that promise will require significant improvements in both hardware and software.

At Computex, Intel CEO Pat Gelsinger projected that AI PCs will make up 80% of all PCs by 2028. He also said Intel, which positions itself at the forefront of this shift, had already shipped more than 8 million PCs with its Core Ultra chips since December.

According to a late-2023 Gartner research report, more than 80% of enterprises are expected to have adopted some form of generative AI by 2026.

Many anticipate that the next major Windows release, widely rumored as Windows 12 and expected by some as early as 2025, will play a crucial role in shaping the future of AI PCs. It is expected to integrate AI capabilities more deeply into the operating system, potentially unlocking new features for AI PCs.

This upgrade could address some of the current limitations and provide a clearer picture of the true potential of AI-powered devices.

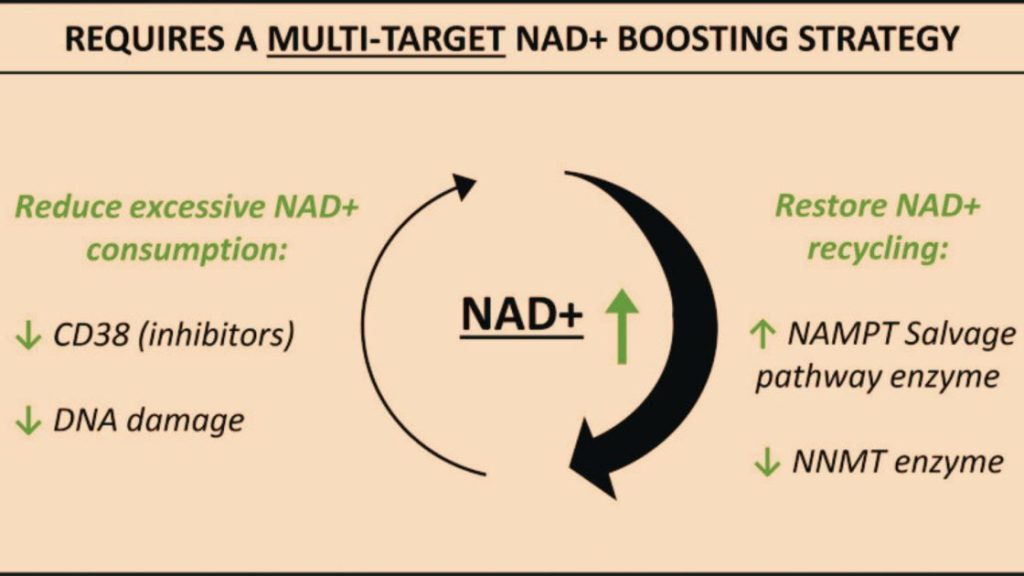

Intel and other chipmakers are also developing next-generation NPUs with higher performance. Their shared goal is to overcome current limitations and deliver more meaningful benefits for AI applications.

As AI technology evolves and NPUs grow more powerful and more deeply integrated into everyday computing, their contribution to productivity and efficiency is likely to become more evident.