Chapter 1: Fundamentals

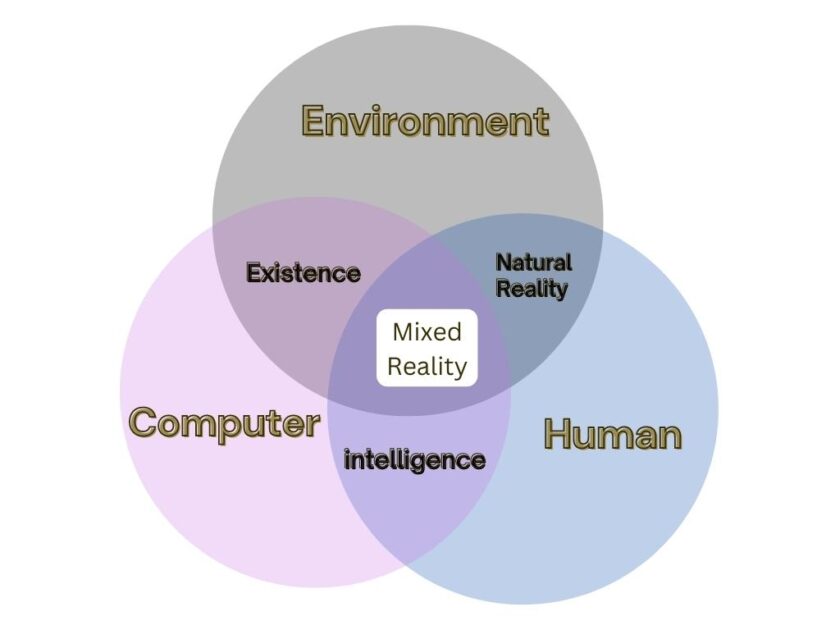

This is how mixed reality works:

Mixed reality combines the real and digital worlds to create new experiences. In the physical world, we know of 118 chemical elements. Some of them, such as oxygen, nitrogen, and carbon, are essential for life. The virtual world has its own sets of elements too. We have not named them yet, but they exist. Because mixed reality combines real and virtual elements, it overlaps a number of elements from both sides. Here is the definition of mixed reality in the form of a Venn diagram:

Anything that exists inside mixed reality has to exist physically first. So something digital is physical first and digital second. Even digital elements are part of the physical world; they just exist in a different form. For example, in the physical world we interact with objects such as trees, rocks, and buildings. In the virtual world, we create digital versions of these objects, such as 3D models of trees, rocks, and buildings. By overlapping the two, we get digital objects that exist in both the physical and virtual worlds. That is what a mixed reality experience is.

Chapter 2: MR Disrupting Digital Devices and VR

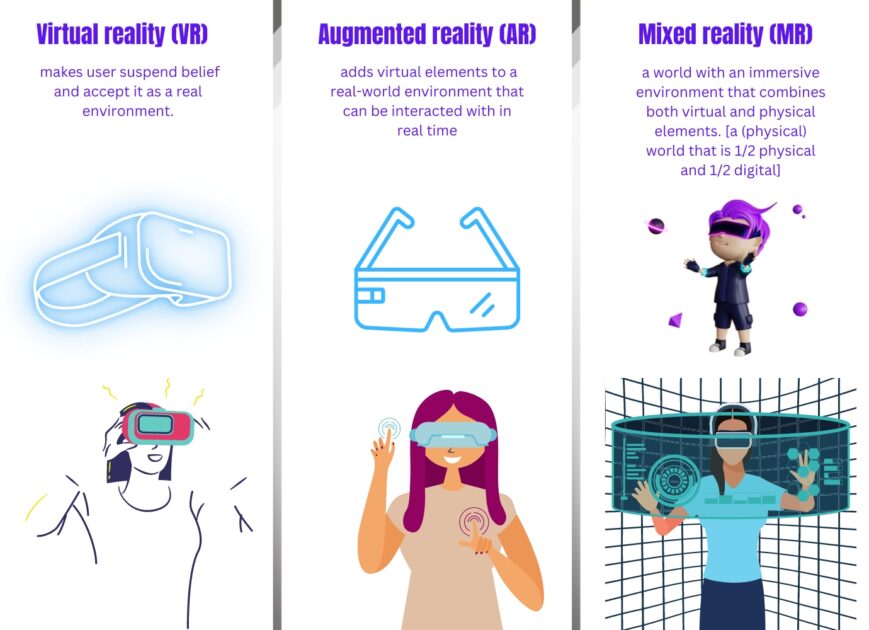

Mixed reality is about replacing smartphones and PCs as our digital partners. We already spend most of our time on screens anyway, and mixed reality is a better side-by-side alternative to them. According to a 2017 study, 48% of people are merely used to digital devices but do not think that technology has really made a positive difference in the past 50 years. Mixed reality technology could help reduce that number by more than half. Anyone who wants to take a break from reality should choose something like this instead of VR platforms like Oculus. With mixed reality, you can enjoy an enhanced visual experience that is still real. Mixed reality is disrupting touch-screen smartphones and also surpassing VR, especially as VR is still new, and that is fantastic.

Mixed reality technology is estimated to increase customer engagement by up to 37% by 2030. In fact, 86% of buyers are willing to pay more for a better customer experience. And mixed reality offers far better customer service than any other practical alternative, at least for 73% of buyers. There is no reason for companies to build VR headsets for gaming instead of mixed reality platforms, where customers actually convert. 80% of customers who used augmented reality technology said that it helps them understand a product better. If they understand it better, they simply buy more.

Mixed Reality replaces:

| Field | Replace | With |
|---|---|---|
| Education | Textbooks | Interactive 3D world |
| Healthcare | In-person visits | Virtual medical visits |
| Gaming | Traditional video games | Immersive gaming experiences |
| Retail | Brick and mortar stores | Virtual or augmented showrooms |
| Design | 2D drawings | 3D models and simulations |
| Marketing | Print and digital ads | Interactive experiences |
| Manufacturing | Analog machines | Digital workflows |
| Entertainment | Traditional movies | Immersive storytelling |
| Tourism | In-person visits | Virtual tours |
| Real Estate | Site visits | Virtual showrooms |

Chapter 3: Mixed Reality Glasses

One good thing about mixed reality is its glasses. They are actual glasses and not headsets like VR. Augmented reality and mixed reality are not the same, but we can use the terms “AR headset” and “MR headset” synonymously. That’s because mixed reality is all about using augmented glasses to blend the physical and virtual worlds. Mixed reality glasses are 5-9 times lighter than VR headsets. Have a look at these Nreal Air glasses from Amazon:

Pretty awesome, aren’t they? However, before buying any mixed reality glasses, check their authenticity. Nreal Air glasses are TÜV Rheinland Group-certified for low blue light, flicker-free display, and eye comfort. Inauthentic glasses can cause eye strain and headaches. That, of course, is not what you want from mixed reality. There are enough headaches in real life. For the best 3D viewing, turn on Mixed Reality Viewer mode and be ready to enter an alternate reality. This mode supports object tracking, allowing users to interact with virtual objects in 3D.

A more detail-oriented person may not be pleased with the quality of any mixed reality glasses. For them, Apple is going to release a pair the following year. Yes, we have written a separate article about that, so make sure to check it out.

Chapter 4: Engineering

The engineering of mixed reality works like this: create, capture, analyze, process, and display data.
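The five stages named above can be pictured as a chain of functions that hand a frame of data from one step to the next. The sketch below is only an illustration under stated assumptions: the `Frame` type, the stage functions, and the sample depth values are all hypothetical and do not come from any real headset SDK.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Frame:
    """Hypothetical container handed from one stage to the next."""
    virtual_objects: list = field(default_factory=list)  # created content
    sensor_points: list = field(default_factory=list)    # captured depths (metres)
    nearest_surface: Optional[float] = None               # result of analysis
    placements: dict = field(default_factory=dict)        # processed layout


def create(frame):
    # Create: decide which virtual objects should appear (made-up names).
    frame.virtual_objects = ["tree", "rock"]
    return frame


def capture(frame):
    # Capture: read the sensors; fixed sample depths stand in for real data.
    frame.sensor_points = [2.4, 1.8, 3.1]
    return frame


def analyze(frame):
    # Analyze: find the closest real surface in the captured data.
    frame.nearest_surface = min(frame.sensor_points)
    return frame


def process(frame):
    # Process: anchor every virtual object to that surface.
    frame.placements = {name: frame.nearest_surface
                        for name in frame.virtual_objects}
    return frame


def display(frame):
    # Display: a real device would render; here we only describe the result.
    return [f"{name} at {dist:.1f} m" for name, dist in frame.placements.items()]


def run_pipeline():
    frame = Frame()
    for stage in (create, capture, analyze, process):
        frame = stage(frame)
    return display(frame)


print(run_pipeline())
```

Keeping each stage as a separate function makes it easy to swap one out, for example replacing the fixed sample depths with a real sensor feed, without touching the others.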

The most advanced MR headsets feature a combination of optical displays and motion tracking sensors. The displays are typically LCD or OLED screens that allow for a wide field of view, while the motion tracking sensors allow for precise tracking of the user’s head and hand movements. In addition, many MR headsets include specialized audio and haptic feedback systems, allowing users to feel vibrations or other tactile feedback. To create a truly immersive and interactive experience, mixed reality headsets must also be ergonomic and comfortable. This includes lightweight materials and designs, as well as adjustable straps and padding to ensure a secure and comfortable fit. A powerful graphics card is certainly important, but headsets can generate a significant amount of heat while in use, so their creators pay special attention to temperature control. The internal cooling system prevents overheating and maximizes user comfort.

Chapter 5: Mixed Reality Economy

In mixed reality, the economy changes its norms. Buying and selling work differently in mixed reality than in the normal, real world. Not only do you pay with digital currencies, you also buy digital assets. As the worlds of virtual and physical reality become increasingly intertwined, new markets are born. Companies now offer digital goods, services, and experiences to consumers. Consumers can purchase virtual assets such as digital collectibles, virtual goods, and virtual currencies. One can also buy, trade, and earn digital goods and services within the new economy. Businesses in the mixed reality economy are already accepting crypto as payment for goods and services. The average cost of creating a product is $30,000. Mixed reality reduces that by up to 20%, as it lowers the cost of prototyping and testing. This helps businesses increase profitability, reach more customers, and, in the long term, create better products.
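To make the cost figures above concrete, here is the arithmetic as a small sketch. The function name and constants are made up for illustration; the only inputs taken from the text are the $30,000 average cost and the "up to 20%" saving, which is treated here as the best case.

```python
def cost_with_mixed_reality(base_cost: float, saving_rate: float) -> float:
    """Return the product cost after applying a fractional saving."""
    if not 0.0 <= saving_rate <= 1.0:
        raise ValueError("saving_rate must be between 0 and 1")
    return base_cost * (1.0 - saving_rate)


AVERAGE_PRODUCT_COST = 30_000  # dollars, the figure quoted in the text
MAX_SAVING_RATE = 0.20         # "up to 20%", so this is the best case

best_case = cost_with_mixed_reality(AVERAGE_PRODUCT_COST, MAX_SAVING_RATE)
print(f"Best-case cost:   ${best_case:,.0f}")
print(f"Best-case saving: ${AVERAGE_PRODUCT_COST - best_case:,.0f}")
```

In the best case, the cost drops from $30,000 to $24,000, a saving of $6,000 per product. Any smaller saving rate lands somewhere between those two figures.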

Chapter 6: Privacy

Privacy is a concern for early adopters of mixed reality. Protecting it starts with understanding the data being collected, as mixed reality blends a lot of things. Sensors, cameras, and other devices track user information and movements, so user consent is necessary. Data is then encrypted and stored securely. Data can be shared with third parties, but user control is still the key. As the overall field of wearable technology matures, privacy concerns surround the whole field. In fact, privacy is now a priority for the makers of such technology. Mixed reality privacy is enforced through user control options, data encryption, and secure storage. Currently, almost one-fourth of the US population uses AR at least once a month. As more people get used to mixed reality, the concern for privacy will decrease, just as people accepted the internet and its risks.
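The consent and user-control ideas above can be sketched in a few lines. Everything here is hypothetical: `ConsentSettings`, `SensorLog`, and the sensor and third-party names are invented for the example. Encryption and secure storage are deliberately left out, since a real system would rely on a vetted cryptography library for those.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentSettings:
    """Hypothetical per-user privacy switches."""
    allowed_sensors: set = field(default_factory=set)
    allowed_third_parties: set = field(default_factory=set)


class SensorLog:
    """Keeps readings only from sensors the user has agreed to."""

    def __init__(self, consent: ConsentSettings):
        self.consent = consent
        self.records = []

    def record(self, sensor: str, reading) -> bool:
        # Drop the reading unless the user consented to this sensor.
        if sensor not in self.consent.allowed_sensors:
            return False
        self.records.append((sensor, reading))
        return True

    def export_for(self, third_party: str) -> list:
        # Share a copy of the data only with parties the user approved.
        if third_party not in self.consent.allowed_third_parties:
            return []
        return list(self.records)


consent = ConsentSettings(allowed_sensors={"head_pose"},
                          allowed_third_parties={"analytics"})
log = SensorLog(consent)
log.record("head_pose", (0.0, 1.6, 0.0))  # stored: user allowed head tracking
log.record("camera", "frame-001")         # dropped: no consent for the camera
print(log.export_for("analytics"))        # shared: approved third party
print(log.export_for("advertiser"))       # empty: not approved
```

The point of the design is that the consent check sits in front of both collection and sharing, so changing a single setting changes what the device is allowed to keep or pass on.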

Chapter 7: Mixed reality software

We have talked enough about the hardware side of mixed reality. But what about the software, the actual brain of the body and the most important part? MR software enables immersive experiences through a combination of specialized software, hardware, and AI algorithms. Cameras capture user movements and generate 3D models. These models interact with virtual objects. Simultaneously, audio and visual feedback is provided. This helps create an interactive and engaging experience. AI algorithms allow for real-time interactions, creating a more realistic environment. The user can interact with the virtual environment, which also makes it more enjoyable. MR software is used in gaming, training, and other applications. It will continue to evolve, creating more immersive and engaging experiences.
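As a rough illustration of how tracked movements can interact with virtual objects, the sketch below tests whether a tracked hand position falls inside an object's bounding sphere. This is an assumption made for the example, not how any particular MR runtime does it; `VirtualObject`, `touched_objects`, and the coordinates are all hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class VirtualObject:
    name: str
    center: tuple   # (x, y, z) position in metres
    radius: float   # bounding-sphere radius in metres


def touched_objects(hand_position, objects):
    """Return the names of objects whose bounding sphere contains the hand."""
    return [obj.name for obj in objects
            if math.dist(hand_position, obj.center) <= obj.radius]


# Made-up scene: two virtual objects floating in front of the user.
scene = [
    VirtualObject("cube", center=(0.0, 1.0, 1.0), radius=0.2),
    VirtualObject("ball", center=(1.0, 1.0, 1.0), radius=0.1),
]

# A hand position as a tracking system might report it (sample value).
hand = (0.1, 1.0, 1.0)
print(touched_objects(hand, scene))
```

A bounding sphere is the simplest possible collision shape. Real engines usually offer boxes, capsules, and mesh colliders as well, but the distance check shows the basic idea.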

Now, how does the software work? Different subfields of AI play a key role in mixed reality software.

Image recognition algorithms identify objects:

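```
import cv2

# Load the image and convert it to grayscale for feature detection
img = cv2.imread('image.jpg')
if img is None:
    raise FileNotFoundError('image.jpg could not be read')
gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)

# SIFT is in the main module since OpenCV 4.4 (formerly cv2.xfeatures2d)
sift = cv2.SIFT_create()

# Detect keypoints, the building blocks used to match and recognize objects
kp = sift.detect(gray, None)
img = cv2.drawKeypoints(gray, kp, img)

cv2.imwrite('sift_keypoints.jpg', img)
```

Natural language processing helps interaction: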

```
import json
import pickle
import random

import nltk
import numpy
import tensorflow
import tflearn
from nltk.stem.lancaster import LancasterStemmer

stemmer = LancasterStemmer()

# nltk.word_tokenize needs NLTK's tokenizer data to be downloaded first
with open("intents.json") as file:
    data = json.load(file)

try:
    # Reuse the preprocessed training data if an earlier run cached it
    with open("data.pickle", "rb") as f:
        words, labels, training, output = pickle.load(f)
except Exception:
    words = []
    labels = []
    docs_x = []
    docs_y = []

    # Tokenize every pattern and remember which intent it belongs to
    for intent in data["intents"]:
        for pattern in intent["patterns"]:
            wrds = nltk.word_tokenize(pattern)
            words.extend(wrds)
            docs_x.append(wrds)
            docs_y.append(intent["tag"])

        if intent["tag"] not in labels:
            labels.append(intent["tag"])

    # Build the stemmed, de-duplicated vocabulary
    words = [stemmer.stem(w.lower()) for w in words if w != "?"]
    words = sorted(list(set(words)))
    labels = sorted(labels)

    training = []
    output = []
    out_empty = [0 for _ in range(len(labels))]

    # Encode each pattern as a bag of words and each tag as a one-hot row
    for x, doc in enumerate(docs_x):
        bag = []
        wrds = [stemmer.stem(w.lower()) for w in doc]

        for w in words:
            if w in wrds:
                bag.append(1)
            else:
                bag.append(0)

        output_row = out_empty[:]
        output_row[labels.index(docs_y[x])] = 1

        training.append(bag)
        output.append(output_row)

    training = numpy.array(training)
    output = numpy.array(output)

    with open("data.pickle", "wb") as f:
        pickle.dump((words, labels, training, output), f)

# tflearn builds a TF1-style graph; compat.v1 also works on TensorFlow 2.x
tensorflow.compat.v1.reset_default_graph()

# Two small hidden layers followed by a softmax over the intent tags
net = tflearn.input_data(shape=[None, len(training[0])])
net = tflearn.fully_connected(net, 8)
net = tflearn.fully_connected(net, 8)
net = tflearn.fully_connected(net, len(output[0]), activation="softmax")
net = tflearn.regression(net)

model = tflearn.DNN(net)

try:
    model.load("model.tflearn")
except Exception:
    # No saved model yet, so train one and save it
    model.fit(training, output, n_epoch=1000, batch_size=8, show_metric=True)
    model.save("model.tflearn")


def bag_of_words(s, words):
    """Encode a sentence as a 0/1 vector over the known vocabulary."""
    bag = [0 for _ in range(len(words))]

    s_words = nltk.word_tokenize(s)
    s_words = [stemmer.stem(word.lower()) for word in s_words]

    for se in s_words:
        for i, w in enumerate(words):
            if w == se:
                bag[i] = 1

    return numpy.array(bag)


def chat():
    print("Start talking with the bot (type quit to stop)!")
    while True:
        inp = input("You: ")
        if inp.lower() == "quit":
            break

        # Pick the most likely intent and answer with one of its responses
        results = model.predict([bag_of_words(inp, words)])
        results_index = numpy.argmax(results)
        tag = labels[results_index]

        for tg in data["intents"]:
            if tg['tag'] == tag:
                responses = tg['responses']

        print(random.choice(responses))


chat()
```

Machine learning algorithms improve the user experience:

```
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt

# In a Jupyter notebook, also run: %matplotlib inline

from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler
from sklearn.neighbors import KNeighborsClassifier
from sklearn.metrics import classification_report, confusion_matrix

df = pd.read_csv('Classified Data', index_col=0)
print(df.head())

# Standardize the features so no single column dominates the distances
scaler = StandardScaler()
scaler.fit(df.drop('TARGET CLASS', axis=1))
scaled_features = scaler.transform(df.drop('TARGET CLASS', axis=1))
df_feat = pd.DataFrame(scaled_features, columns=df.columns[:-1])
print(df_feat.head())

X = df_feat
y = df['TARGET CLASS']
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.30, random_state=101)

# Baseline: a single nearest neighbor
knn = KNeighborsClassifier(n_neighbors=1)
knn.fit(X_train, y_train)
pred = knn.predict(X_test)

print(confusion_matrix(y_test, pred))
print(classification_report(y_test, pred))

# Try K = 1..39 and record the test error for each value
error_rate = []
for i in range(1, 40):
    knn = KNeighborsClassifier(n_neighbors=i)
    knn.fit(X_train, y_train)
    pred_i = knn.predict(X_test)
    error_rate.append(np.mean(pred_i != y_test))

plt.figure(figsize=(10, 6))
plt.plot(range(1, 40), error_rate, color='blue', linestyle='dashed', marker='o',
         markerfacecolor='red', markersize=10)
plt.title('Error Rate vs. K Value')
plt.xlabel('K')
plt.ylabel('Error Rate')
plt.show()

# Retrain with a K chosen from the plot
knn = KNeighborsClassifier(n_neighbors=17)
knn.fit(X_train, y_train)
pred = knn.predict(X_test)

print('WITH K=17')
print('\n')
print(confusion_matrix(y_test, pred))
print('\n')
print(classification_report(y_test, pred))
```

For a mixed reality system to operate properly, all three of these elements need to be in sync. Together, they enable the application to recognize and interact with the world around it. For example, it might recognize a chair, understand your command to sit down, and then take the appropriate action. To achieve true mixed reality, the software needs to understand both the environment and the user’s needs. Apart from the three elements above, this requires additional capabilities such as 3D mapping, motion capture, and facial recognition. Smartphones and other devices already use facial recognition and motion capture, and 3D mapping is a technology in development.
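The chair example can be sketched as a tiny decision step that sits after recognition and language understanding. This is a toy illustration only: `choose_action` and its verb-to-object table are hypothetical, and the set of recognized objects is assumed to come from an image recognition stage that is not shown here.

```python
# Hypothetical vocabulary: each command verb and the object it needs.
REQUIRED_OBJECT = {"sit": "chair", "open": "door"}


def choose_action(recognized_objects, command):
    """Return (verb, object) if the command matches something in view."""
    spoken_words = command.lower().split()
    for verb, target in REQUIRED_OBJECT.items():
        # Act only when the verb was spoken AND its object was recognized.
        if verb in spoken_words and target in recognized_objects:
            return (verb, target)
    return None


# Objects assumed to be reported by the image recognition stage.
in_view = {"chair", "table"}

print(choose_action(in_view, "Please sit down"))  # verb and object line up
print(choose_action(in_view, "Open it"))          # no door in view
```

The action fires only when both signals agree, which is the sense in which the elements have to be in sync: a recognized chair without a command, or a command without a chair, produces nothing.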

3D modeling for mixed reality using the Unity engine:

```
using Microsoft.MixedReality.Toolkit;
using UnityEngine;

public class MixedRealityController : MonoBehaviour
{
    // Reference to the camera in the scene
    public Camera sceneCamera;

    // Reference to the 3D object to place (identifiers cannot start with a digit)
    public GameObject model3D;

    // Reference to the MixedRealityToolkit object
    public MixedRealityToolkit mrt;

    void Start()
    {
        // The toolkit sets itself up when its component is active in the scene,
        // so the script only verifies that the reference was assigned
        if (mrt == null)
        {
            Debug.LogWarning("No MixedRealityToolkit assigned to this controller.");
        }

        // Create an instance of the 3D model in the scene
        GameObject model = Instantiate(model3D);

        // Position the 3D model three units in front of the camera
        model.transform.position = sceneCamera.transform.position + sceneCamera.transform.forward * 3f;

        // Set the scale of the 3D model
        model.transform.localScale = new Vector3(1f, 1f, 1f);

        // Match the rotation of the 3D model to the camera
        model.transform.rotation = sceneCamera.transform.rotation;
    }
}
```

The script above instantiates a 3D model in the scene, positions it three units in front of the camera, and sets its scale and rotation based on the camera's position and orientation. This is a key step in creating mixed reality applications. Other necessary steps include setting up an appropriate lighting system, adding colliders, and defining the interactions available to the user.

Conclusion

Yes, it is a great idea, but only as long as privacy is a priority. Other than that, mixed reality is pretty much disrupting smartphones, VR, and reality itself. Especially with the help of evolutionary algorithms and data-driven simulations, mixed reality is here not only to stay but to evolve. We can never overlook the hardware potential of this technology either. Sharp graphics, smooth movements, and an immersive experience all make reality better when mixed.